Here’s a question many Chicagoland business leaders have not had to answer yet:

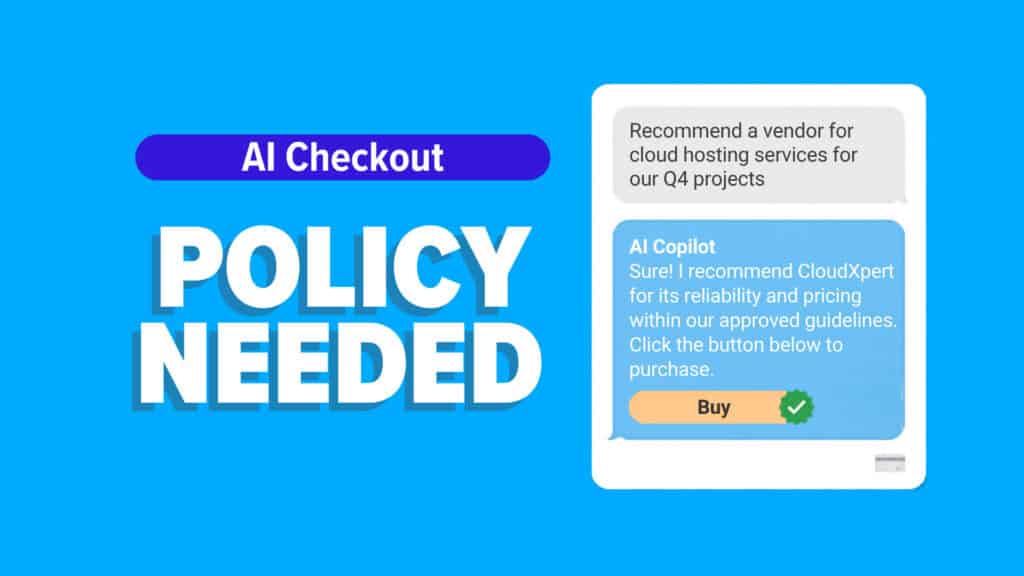

If someone on your team buys something inside an AI chat window, is that okay with you?

Because that is no longer a far-off idea. It’s already showing up.

Most teams know AI tools like ChatGPT and Microsoft Copilot for writing, summarizing, and quick answers. The next step is more operational, and more sensitive:

Purchasing.

What’s changing

OpenAI introduced an experiment called Instant Checkout, where some shopping journeys could be completed inside ChatGPT for participating sellers. At the same time, OpenAI’s merchant guidance now emphasizes product discovery with checkout completed on merchant-owned sites or apps, rather than a standalone “buy in chat” flow.

Microsoft is also pushing forward with Copilot Checkout. Microsoft has described it as an in-chat purchase flow that can appear when a Copilot conversation leads naturally to shopping, with PayPal, Shopify, and Stripe as early partners.

From a product standpoint, it’s easy to see the appeal. Less friction. Faster decisions. Fewer abandoned carts. Microsoft is explicitly building for “no redirect” buying experiences inside Copilot.

For consumers, that convenience is the whole point.

For businesses, it raises a different set of questions.

The simple question: do you want employees buying this way?

In many organizations, purchasing is slow on purpose.

There are approvals. Budgets. Vendor lists. Controls. Someone checks what’s being bought, why it’s being bought, and which account is paying for it.

AI checkout inside a chat window can quietly bypass the “pause points” your process depends on, especially when people are moving fast and trying to solve a problem in the moment.

It’s not that employees are trying to break rules. It’s that the workflow encourages them to keep going.

If your policies do not mention AI-driven checkout, most people will assume it’s fine.

The less obvious question: what data is involved?

To make checkout work, payment details, shipping information, and account context all have to pass through the flow in some form.

Even when the platforms involved are reputable, the business question is not “are PayPal, Shopify, or Stripe trustworthy?” It’s “do our policies and controls account for this buying path?”

A few practical questions leaders should be able to answer:

- If an employee is signed into Copilot with a work account, what purchasing identity is that tied to?

- Which payment methods are permitted, and where are they stored?

- Are purchases logged somewhere central, or do they disappear into email receipts and individual accounts?

- If a purchase creates a new SaaS subscription, who owns renewals, cancellation, and access?

The behavior shift: when buying gets easier, spending expands

This is the part that can sneak up on you.

When the purchase path is frictionless, people tend to buy more. Not because they are careless, but because the “effort tax” that used to slow decisions down is gone.

That is great for sellers. It can quietly inflate costs for you if nobody is watching.

This is not “good” or “bad.” It needs a decision.

AI checkout features do not arrive with a big warning label that says “update your policies.”

They appear. People experiment. A few purchases go through. Then a leader finds out after the fact.

A better approach is simple: decide on purpose.

If you allow it, add guardrails that match your current purchasing discipline

If you want your team to use AI checkout, treat it like any other purchasing channel and define the rules:

- Who can buy (by role, department, or spending threshold)

- What can be bought (approved categories, approved vendors)

- Which accounts are allowed (work identity only, no personal accounts)

- Which payment methods are allowed (corporate card only, not stored personal cards)

- Where visibility lives (centralized logging, receipt routing, and periodic review)

- How subscriptions are handled (owner, renewal alerts, offboarding steps)

This is where Copilot governance and controls matter. Convenience is fine, but only if it sits inside clear boundaries.
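For teams that like to see rules written down precisely, the guardrails above can be sketched as a small policy check. This is only an illustration, not a feature of Copilot, ChatGPT, or any payment platform. Every name and value in it is a hypothetical placeholder (`POLICY`, `PurchaseRequest`, `check_purchase`, the roles, limits, and vendors), and it assumes purchases are reviewed or recorded somewhere you control.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy values for illustration only; substitute your own.
POLICY = {
    "spend_limit_by_role": {"manager": 500.0, "it_admin": 2000.0},  # who can buy
    "approved_categories": {"software", "office_supplies"},        # what can be bought
    "approved_vendors": {"Example Vendor A", "Example Vendor B"},
    "allowed_account_types": {"work"},                              # no personal accounts
    "allowed_payment_methods": {"corporate_card"},                  # no stored personal cards
}


@dataclass
class PurchaseRequest:
    role: str
    amount: float
    category: str
    vendor: str
    account_type: str            # e.g. "work" or "personal"
    payment_method: str          # e.g. "corporate_card"
    is_subscription: bool = False
    subscription_owner: Optional[str] = None


def check_purchase(request: PurchaseRequest, policy: dict = POLICY) -> list[str]:
    """Return the list of policy problems; an empty list means the purchase fits the rules."""
    problems = []

    limit = policy["spend_limit_by_role"].get(request.role)
    if limit is None:
        problems.append("role is not authorized to buy")
    elif request.amount > limit:
        problems.append("amount exceeds the spending threshold for this role")

    if request.category not in policy["approved_categories"]:
        problems.append("category is not approved")
    if request.vendor not in policy["approved_vendors"]:
        problems.append("vendor is not approved")
    if request.account_type not in policy["allowed_account_types"]:
        problems.append("account type is not allowed")
    if request.payment_method not in policy["allowed_payment_methods"]:
        problems.append("payment method is not allowed")

    # Subscriptions need a named owner for renewals, cancellation, and offboarding.
    if request.is_subscription and not request.subscription_owner:
        problems.append("subscription has no named owner")

    return problems
```

Calling `check_purchase(...)` on a request returns the list of rules it breaks, which is the kind of output a periodic review could work from. The value of the exercise is less the code than being forced to fill in each field of the policy on purpose.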

If you do not allow it, say that clearly and enforce it

If you do not want AI checkout used, that decision needs to be written down and reinforced.

Otherwise, people will assume:

- “If it’s there, we’re allowed to use it.”

- “If IT didn’t block it, it must be okay.”

Clarity beats surprise.

Where to start if you want a practical framework

If your organization already has a purchasing process, do not reinvent it. Extend it.

A helpful lens is the same one you would use for any tech buying decision: rules, accountability, and verification. If you want a broader guide to evaluating technology partners and purchase decisions, the IT Services Buyer’s Guide is a good baseline.

The real question

The question is not whether your team can use AI checkout.

It’s whether you have decided if they should.

If you want help setting clear guardrails for AI purchasing, and making sure your policies, accounts, and approvals match how people actually work, get in touch.