Here’s a slightly uncomfortable question for Chicagoland leaders:

Do you know which AI tools your team is using at work, and what they’re putting into them?

Most leaders think they do. Then we look closer and find a mix of approved tools, personal accounts, and random browser-based AI apps that popped up last week.

Generative AI has slipped into daily work fast. People use it to draft emails, summarize long documents, rewrite policies, create proposals, and brainstorm ideas. That’s not the problem.

The problem is speed. Adoption moved faster than governance.

The hidden issue: “shadow AI”

In many organizations, a chunk of AI usage happens through personal accounts or unsanctioned apps. This is often called shadow AI.

It means staff are pasting text, uploading files, or rewording sensitive content inside systems the business does not manage. That also means you can’t easily see it, audit it, or enforce consistent rules around it.

Most of the time, there’s no bad intent. It’s a smart employee trying to move faster.

But the risk is real, because prompts are not just questions. Prompts are data.

What tends to get shared without people realizing

When teams get comfortable with AI, the line between “harmless” and “sensitive” blurs. We see people drop in:

- Customer names, identifiers, or details from emails

- Internal docs, meeting notes, or incident summaries

- Pricing, margin notes, vendor terms, or contract language

- Screenshots and PDFs that include confidential information

- “Quick” troubleshooting details that accidentally include credentials or access paths

In regulated environments like healthcare, insurance, education, government, and nonprofit, the compliance angle matters too. If uncontrolled AI use violates internal policy, client expectations, or data handling requirements, it creates risk without any obvious alarm bell.

This is how organizations get surprised. Not by an attacker “breaking in,” but by information drifting out through everyday work habits.

AI risk often looks like a normal person making a normal mistake

A lot of leaders picture AI risk as something external.

In reality, the common failure mode is simple:

Someone copies and pastes the wrong thing into the wrong box, at the wrong time.

That can be enough to create exposure, reputational damage, or a compliance headache, even if no one meant harm.

The answer is not banning AI

Banning AI usually fails for one reason: people still need to get work done. If the approved path is unclear, they will find a path.

The better approach is governance. Practical, lightweight, and easy to follow.

Governance means:

- You choose which tools are approved for work use

- You set clear rules for what can and cannot be shared

- You add visibility so you can see which tools are actually in use

- You train staff with plain-language examples, not scare tactics

In other words, AI becomes part of operations instead of a side hobby that spreads without boundaries.

A simple AI governance checklist for Chicagoland leaders

If you want a clean starting point, here’s what we recommend:

1) Name the approved tools.

Write down what’s allowed. If you are standardizing on Microsoft, define the approved Copilot experiences and where they should be used.

This is where Copilot governance and controls matter most. You want the benefits without the data drifting into unmanaged places.

2) Define “safe to paste” in one page.

Make it obvious. Give examples of what is allowed and what is not. Staff do better with examples than with vague rules.

Good “do not paste” categories typically include:

- Customer data and protected information

- Credentials, access links, or admin details

- Financial account details

- Contracts, pricing strategies, and sensitive negotiations

- Anything you would not feel comfortable forwarding outside the company
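For teams that want to back the one-pager with a light technical nudge, here is a minimal sketch of a "check before you paste" helper. It is an illustration, not a data loss prevention product: the category names, the patterns, and the `flag_sensitive` function are all hypothetical, and simple patterns like these will miss plenty and occasionally flag harmless text.

```python
import re

# Hypothetical patterns for illustration only. A real policy would define
# its own categories, and pattern matching alone will not catch everything.
PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "credential": re.compile(
        r"(?i)\b(password|passwd|api[_-]?key|secret|token)\b\s*[:=]\s*\S+"
    ),
    "long account-like number": re.compile(r"\b\d{9,}\b"),
}


def flag_sensitive(text: str) -> list[str]:
    """Return the names of the categories whose pattern appears in the text."""
    return [name for name, pattern in PATTERNS.items() if pattern.search(text)]


if __name__ == "__main__":
    draft = "Can you fix this? password = hunter2, contact jane@example.com"
    for category in flag_sensitive(draft):
        print(f"Possible {category} found. Check the one-page rules before pasting.")
```

The point of a helper like this is the reminder, not enforcement. It works best alongside the plain-language examples, not as a replacement for them.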

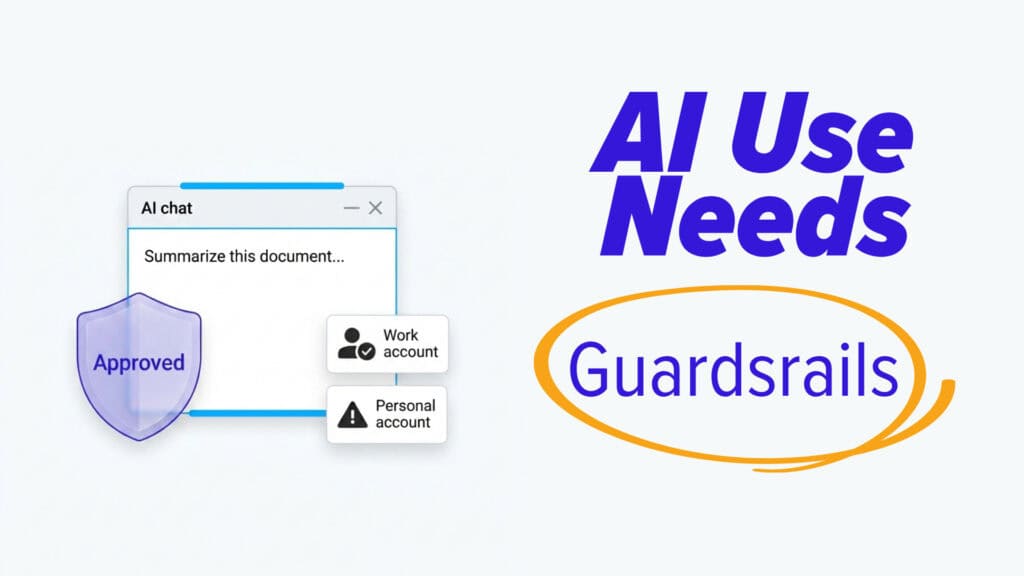

3) Decide how accounts should be used.

Using personal accounts for work content is where things get messy fast. Decide what “work account only” means in practice and what exceptions, if any, exist.

4) Add a simple approval lane for high-risk use.

If someone wants to use AI for a policy rewrite, a contract summary, a board memo, or anything sensitive, give them a quick way to check in before sharing data.

5) Make training short and recurring.

One long session per year does not match how people learn tools. Short reminders, quick examples, and a few “what not to do” scenarios go further.

6) Track and review.

You do not need to police every prompt. You do need to know what tools are in use, where the pressure points are, and whether teams are pushing sensitive work into the wrong places.
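As a rough illustration of what "track and review" can look like without reading anyone's prompts, the sketch below tallies which tools show up in a usage log and which teams are reaching for unapproved ones. Everything here is an assumption for the example: the `(team, tool)` record format, the `APPROVED_TOOLS` set, and the `review_usage` function are hypothetical, and real data would come from whatever reporting your environment already provides.

```python
from collections import Counter

# Hypothetical approved list, for illustration only.
APPROVED_TOOLS = {"copilot"}


def review_usage(records, approved=APPROVED_TOOLS):
    """Count uses per tool, and count unapproved uses per team.

    `records` is an iterable of (team, tool) pairs. No prompt content
    is needed or inspected.
    """
    tools_seen = Counter()
    unapproved_by_team = Counter()
    for team, tool in records:
        name = tool.strip().lower()  # treat "Copilot" and "copilot " alike
        tools_seen[name] += 1
        if name not in approved:
            unapproved_by_team[team] += 1
    return tools_seen, unapproved_by_team


if __name__ == "__main__":
    # Made-up sample records; "ChatbotX" is a placeholder tool name.
    sample = [
        ("sales", "Copilot"),
        ("sales", "ChatbotX"),
        ("finance", "chatbotx"),
    ]
    tools, pressure_points = review_usage(sample)
    print("Tools in use:", dict(tools))
    print("Unapproved use by team:", dict(pressure_points))
```

A team that shows up often in the second tally is usually telling you the approved path is unclear or missing something, which is a training or tooling conversation, not a disciplinary one.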

Make AI adoption intentional

Most teams do not need more AI hype. They need clarity.

If you want AI to boost productivity without turning into a blind spot, treat it like any other business tool: set the rules, make them easy to follow, and keep the guardrails practical.

If you’re building a plan, a secure AI rollout for SMBs is a solid target. Start with a small set of approved tools, clear data rules, and a training loop that respects busy people.

If you want help defining approved tools, setting policies, and rolling out training that actually sticks, get in touch.